The Ethical Challenges of Generative AI Applications

Generative AI (GenAI) systems built on deep learning, neural networks, and other sophisticated machine learning methods have enabled tremendous advances in areas like computer vision, natural language processing, and creative content generation. However, these powerful capabilities also raise critical ethical concerns around bias, transparency, accountability, and unintended societal consequences, and those concerns must be addressed proactively as adoption continues to grow rapidly.

One report groups the ethical and moral concerns raised by AI into eight categories:

- Lack of explainability

- Incorporation of human bias

- English-language and European bias inherent in training data

- Lack and impact of regulation, now and in the future

- Fear of apocalyptic outcomes

- Lack of accountability by developers

- Impact on the environment

- Effect on labor, both in the data mines and on the coming battlefield of machine versus human productivity

This post takes an in-depth look at a handful of these key ethical issues posed by GenAI applications, highlighting recent problems and analyzing potential solutions that allow the technology to be harnessed responsibly.

Bias in GenAI Models

One of the most pressing issues with GenAI systems is the perpetuation of unfair bias stemming from flaws in the training data or algorithms. Without careful consideration, models can learn and amplify existing societal biases around race, gender, age, ethnicity, and more. The result can be discriminatory and prejudicial outcomes that severely harm individuals and groups.

The graphic below from CSA Research illustrates how training data can lead to different types of bias in models. What makes this bias even harder to pin down is that most model developers never release even the metadata of their training data; if anything is open-sourced, it is usually the fine-tuning data, which is highly curated and not representative of the full training set.

How does this bias in the training data manifest in AI? Facial analysis models have demonstrated higher error rates for women and darker-skinned individuals. Hiring algorithms have disadvantaged qualified candidates from minority backgrounds. Predictive policing systems have exhibited racial biases that lead to over-policing in marginalized communities.

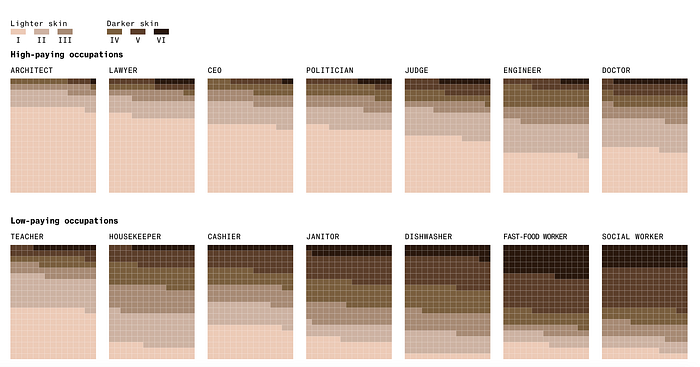

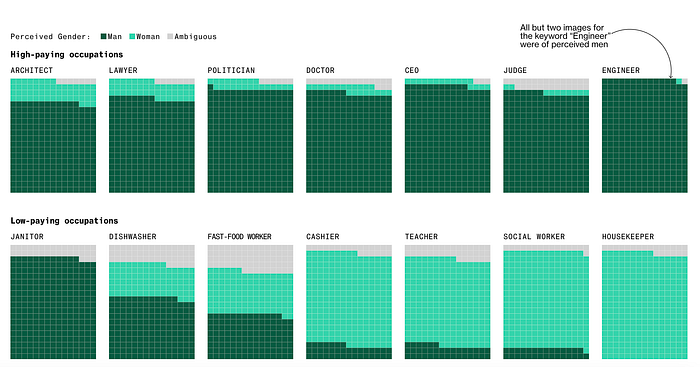

If we look at generative AI in particular, Bloomberg conducted a study analyzing the outputs of Stable Diffusion text-to-image models and concluded, “The world according to Stable Diffusion is run by white male CEOs. Women are rarely doctors, lawyers or judges. Men with dark skin commit crimes, while women with dark skin flip burgers.

Stable Diffusion generates images using artificial intelligence, in response to written prompts. Like many AI models, what it creates may seem plausible on its face but is actually a distortion of reality. An analysis of more than 5,000 images created with Stable Diffusion found that it takes racial and gender disparities to extremes — worse than those found in the real world.” (Link)

Mitigating bias requires a multi-pronged approach. Some considerations include:

- Preparing training data: Curating data carefully, enhancing diversity, and correcting imbalances help reduce baked-in bias.

- Fairness metrics: Rigorously defining, evaluating, and optimizing for fairness measures can quantify and mitigate algorithmic biases.

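- Debiasing techniques: Methods like adversarial debiasing explicitly optimize models to reduce learned biases, for example by training the model alongside an adversary that tries to predict a protected attribute from the model's outputs and penalizing the model whenever the adversary succeeds.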

- Inclusive design: Designing inclusively from the beginning helps ensure that a broader range of users and use cases is considered. If data is primarily sourced from

- Testing models in different environments: One framework that the World Economic Forum offers for this is STEEPV. “STEEPV analysis is a method of performing strategic analysis of external environments. It is an acronym for social (i.e. societal attitudes, culture and demographics), technological, economic (ie interest, growth and inflation rate), environmental, political and values. Performing a STEEPV analysis will help you detect fairness and non-discrimination risks in practice.” (Link)

- Diverse data and teams: Increasing the diversity of both the training data and the teams building GenAI systems also helps offset biases. Many leading companies have dedicated responsible AI teams or AI ethics offices that are involved in the end-to-end process.
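To make the fairness-metrics idea more concrete, here is a minimal Python sketch of two common group-fairness measures: demographic parity difference (the gap in positive-outcome rates between groups) and the disparate impact ratio (the lowest group rate divided by the highest). It assumes binary predictions where 1 is the favorable outcome and a single protected attribute; the function names and the toy data are illustrative, not taken from any particular library or study.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Fraction of favorable (1) predictions within each group."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in selection rate between any two groups (0.0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(predictions, groups):
    """Lowest selection rate divided by the highest (1.0 means parity)."""
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives any favorable outcome, so rates are equal
    return min(rates.values()) / highest


# Hypothetical screening decisions for eight candidates in two groups.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(demographic_parity_difference(preds, groups))    # 0.5
print(round(disparate_impact_ratio(preds, groups), 2))  # 0.33
```

In the toy data, group A is selected 75% of the time and group B 25%. A widely cited heuristic from US employment practice, the four-fifths rule, treats ratios below 0.8 as a sign of possible adverse impact. Maintained libraries such as Fairlearn offer these and many other metrics; which one is appropriate depends on the application.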

There is no straightforward solution to these challenges, but combining these methods is starting to yield a set of best practices for mitigating bias.

Lack of Explainability and Interpretability

With complex GenAI models like deep neural networks, it is often not possible to fully explain the rationale behind their decisions and predictions. However, understanding model behaviors, especially for sensitive applications in areas like finance, healthcare and criminal justice, is essential to ensure transparency, trace accountability, and avoid harm.

Several approaches to enhance interpretability are being explored:

- Local explainability focuses on explaining the reasons for specific individual predictions. This fosters user confidence in the system.

- Rule-based explanations approximate the model logic through more interpretable rules and logical statements.

- Highlighting the most influential input features helps users understand which data drives model outputs.

- Model visualization techniques also provide valuable insights into model mechanics.
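As a rough illustration of local explainability and feature highlighting, the sketch below uses occlusion (also called ablation), one of the simplest perturbation-based attribution methods: replace one input feature at a time with a baseline value and measure how much the model's output changes. The scoring model, its weights, and the feature names are hypothetical stand-ins; with a real GenAI model the same idea is applied to tokens or image patches, and methods such as SHAP or LIME provide more principled attributions.

```python
import math


def toy_model(features):
    """Hypothetical loan-approval scorer: logistic function of a weighted sum."""
    weights = {"income": 0.8, "debt_ratio": -1.5, "years_employed": 0.3}
    z = sum(weights[name] * value for name, value in features.items())
    return 1 / (1 + math.exp(-z))


def occlusion_attribution(model, features, baseline=0.0):
    """Attribute a single prediction to each feature by occluding it.

    A positive score means the feature pushed the output up relative to
    the baseline; a negative score means it pushed the output down.
    """
    original = model(features)
    attributions = {}
    for name in features:
        occluded = dict(features)
        occluded[name] = baseline  # remove this feature's contribution
        attributions[name] = original - model(occluded)
    return attributions


applicant = {"income": 1.2, "debt_ratio": 0.9, "years_employed": 2.0}
attributions = occlusion_attribution(toy_model, applicant)

# Rank features by the size of their effect on this one prediction.
ranking = sorted(attributions, key=lambda name: abs(attributions[name]), reverse=True)
print(ranking)  # ['debt_ratio', 'income', 'years_employed']
```

Here the debt ratio lowers the approval score the most, which is the kind of per-prediction explanation an applicant or auditor might ask for. Occlusion is cheap and model-agnostic, but it ignores interactions between features and its results depend on the chosen baseline.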

Legal and Regulatory Challenges

GenAI systems must comply with existing regulations on data privacy, consumer protection, and anti-discrimination. However, the rapid evolution of AI capabilities presents new policy challenges. Key steps include:

- Developing techniques like federated learning and differential privacy to preserve user privacy.

- Establishing rigorous internal governance procedures for responsible data collection and use practices.

- Advocating for regulations specific to GenAI systems, focused on transparency and accountability.

- Adhering to ethical principles and frameworks on fairness and bias mitigation proposed by organizations like the EU and the OECD.
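Differential privacy, listed among the steps above, has a precise meaning: a query's result should change very little whether or not any single person's record is included. A classic way to achieve this for a counting query is the Laplace mechanism, which adds noise drawn from a Laplace distribution with scale equal to sensitivity / epsilon. The sketch below assumes a simple count with sensitivity 1; the function names are illustrative, and Python's `random` module is used only for readability. Production systems should rely on vetted libraries such as OpenDP or Google's differential-privacy library, since naive floating-point noise generation is known to leak information.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    rate = 1 / scale  # expovariate takes a rate, i.e. 1 / mean
    return rng.expovariate(rate) - rng.expovariate(rate)


def private_count(records, predicate, epsilon, rng=None):
    """Epsilon-differentially private count via the Laplace mechanism.

    A counting query has sensitivity 1: adding or removing one person's
    record changes the true count by at most 1, so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for r in records if predicate(r))
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical example: how many users in a dataset are over 40?
ages = [23, 35, 41, 29, 52, 67, 38]
noisy = private_count(ages, lambda age: age > 40, epsilon=0.5, rng=random.Random(7))
print(noisy)  # true count is 3; the released value is 3 plus random noise
```

A smaller epsilon means more noise and stronger privacy, and every additional query against the same data spends more of the overall privacy budget.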

Handling Hallucinations and Unintended Harmful Effects

A well-known issue with large language models is their propensity to generate false information (often called hallucinations) or content that promotes harmful stereotypes, owing in part to their lack of grounded real-world knowledge and common sense. For instance, ChatGPT has produced toxic text, harmful misinformation, and biased narratives when prompted.

Potential solutions to mitigate the risk of societal harm include:

- Adversarial testing to catch flaws and make models more robust.

- Incorporating human feedback loops to correct bad behavior and guide the model.

- Monitoring deployments and having human oversight over high-risk applications.

The Path Forward for Ethical GenAI

Addressing the multifaceted ethical challenges posed by advancing GenAI requires sustained engagement between stakeholders from research, policy, industry and civil society. Key focal areas for enabling responsible GenAI include:

- Promoting participation of diverse voices in GenAI development and governance.

- Supporting ongoing research to improve techniques and best practices for bias detection and mitigation, explainability, transparency, and error handling.

- Advocating for regulatory policy development to ensure accountability and prevent harm from unchecked GenAI deployment.

- Incentivizing ethical engineering practices focused on safety, transparency and fairness throughout the GenAI model lifecycle.

With proactive efforts to put ethics at the core of the GenAI application development process, this transformative technology can uplift society in countless ways while minimizing risks from misuse and unintended consequences. All stakeholders have a shared responsibility to steer the GenAI revolution towards just and beneficial outcomes for all.

Conclusion

GenAI innovation carries tremendous potential for positive impact but also poses novel challenges we must thoughtfully address. This will require sustained collaboration and open dialogue between all parties to establish norms, incentives, guidelines, and technical standards that enable GenAI technology to be deployed safely, ethically, and for the benefit of humanity as a whole. If we make the necessary investments and tradeoffs, the GenAI applications of the future can reflect the best of human values like compassion, creativity, and justice.